Duplicate Remover

Find and remove duplicate lines, words, or characters in your text. The tool processes everything locally in your browser.

Tips:

- Use Lines mode to remove duplicate lines from lists or data

- Use Words mode to create a list of unique words in a text

- Use Characters mode to remove duplicate characters

- Use Consecutive mode to remove only characters that repeat consecutively

- Toggle Show Duplicates Only to see what items were removed

- Disable Case sensitive to treat "Word" and "word" as the same item
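Lines mode and its two toggles can be sketched in TypeScript as below. This is a minimal illustration, not the tool's own code. The function `dedupeLines` and its `{ kept, duplicates }` result are hypothetical names. The sketch assumes that the first occurrence of each line is kept and that every later repeat is reported as a duplicate.

```typescript
// Hypothetical sketch: names and return shape are illustrative,
// not the Duplicate Remover's actual implementation.
interface DedupeResult {
  kept: string[];       // first occurrence of each line, in original order
  duplicates: string[]; // every later repeat that was dropped (assumed behavior)
}

function dedupeLines(text: string, caseSensitive = true): DedupeResult {
  const seen = new Set<string>();
  const kept: string[] = [];
  const duplicates: string[] = [];
  for (const line of text.split(/\r?\n/)) {
    // With case sensitivity off, "Word" and "word" map to the same key.
    const key = caseSensitive ? line : line.toLowerCase();
    if (seen.has(key)) {
      duplicates.push(line);
    } else {
      seen.add(key);
      kept.push(line);
    }
  }
  return { kept, duplicates };
}

const sample = "apple\nBanana\napple\nbanana";
console.log(dedupeLines(sample).kept);              // ["apple", "Banana", "banana"]
console.log(dedupeLines(sample, false).kept);       // ["apple", "Banana"]
console.log(dedupeLines(sample, false).duplicates); // ["apple", "banana"]
```

The `kept` array corresponds to the normal output. The `duplicates` array corresponds roughly to what Show Duplicates Only would display.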

How to Use the Duplicate Remover

- Enter or paste your text in the input area

- Select what you want to deduplicate (lines, words, characters, or consecutive characters)

- Configure additional options like case sensitivity

- View the result in the output area

- Use the "Show Duplicates Only" option to identify what was removed

- Use the copy button to copy the result

About Duplicate Removal

Duplicate removal is a common text processing step that cleans up data by keeping only one copy of each repeated item. It is particularly useful for large datasets, lists, or any text that may contain repeated entries.

Common use cases for duplicate removal include:

- Cleaning up email lists or contact information

- Removing redundant entries from databases or spreadsheets

- Eliminating duplicate lines in code or configuration files

- Preparing data for analysis by ensuring each data point is counted only once

- Creating lists of unique words from a text for vocabulary analysis

- Removing redundant information to reduce file size
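For email lists, exact matching is often not enough. The same address may appear with different capitalization or with stray spaces around it. The sketch below uses a hypothetical `cleanEmailList` helper that trims each line, skips blank lines, and compares addresses case-insensitively. Lowercasing the whole address is a common simplification, although the email standard technically allows the part before the @ to be case-sensitive.

```typescript
// Hypothetical helper: the normalization rules (trim, skip blanks,
// case-insensitive comparison) are assumptions for illustration.
function cleanEmailList(raw: string): string[] {
  const seen = new Set<string>();
  const result: string[] = [];
  for (const line of raw.split(/\r?\n/)) {
    const email = line.trim();
    if (email === "") continue; // skip empty lines
    const key = email.toLowerCase();
    if (!seen.has(key)) {
      seen.add(key);
      result.push(email); // keep the first spelling encountered
    }
  }
  return result;
}

const contacts = " alice@example.com\nBob@example.com\n\nALICE@example.com \nbob@example.com";
console.log(cleanEmailList(contacts)); // ["alice@example.com", "Bob@example.com"]
```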

Our Duplicate Remover tool offers several deduplication methods:

- Line deduplication - Removes repeated lines while preserving the order

- Word deduplication - Eliminates repeated words within the text

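- Character deduplication - Removes repeated characters, either every later occurrence of a character (Characters mode) or only characters that repeat back to back (Consecutive mode)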
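The difference between Words, Characters, and Consecutive modes can be sketched as three small TypeScript functions. The names `uniqueWords`, `uniqueChars`, and `collapseConsecutive` are hypothetical. Splitting words on whitespace is an assumption, and the tool may treat punctuation differently.

```typescript
// Hypothetical sketches of the three modes; not the tool's actual code.

// Words mode (assumed): keep the first occurrence of each whitespace-separated word.
function uniqueWords(text: string, caseSensitive = true): string[] {
  const seen = new Set<string>();
  const result: string[] = [];
  for (const word of text.split(/\s+/)) {
    if (word === "") continue; // leading/trailing whitespace yields empty strings
    const key = caseSensitive ? word : word.toLowerCase();
    if (!seen.has(key)) {
      seen.add(key);
      result.push(word);
    }
  }
  return result;
}

// Characters mode: keep only the first occurrence of each character anywhere.
function uniqueChars(text: string): string {
  // A Set preserves insertion order; Array.from splits the string by code point.
  return Array.from(new Set(Array.from(text))).join("");
}

// Consecutive mode: collapse each run of the same character into one.
function collapseConsecutive(text: string): string {
  let out = "";
  let prev = ""; // an empty string never equals a real character
  for (const ch of text) {
    if (ch !== prev) out += ch;
    prev = ch;
  }
  return out;
}

console.log(uniqueWords("the cat saw the dog")); // ["the", "cat", "saw", "dog"]
console.log(uniqueChars("bookkeeper"));          // "bokepr"
console.log(collapseConsecutive("bookkeeper"));  // "bokeper"
```

Characters mode drops every later `e` in "bookkeeper". Consecutive mode keeps the `e` after the `p` because it does not directly follow another `e`.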

Additional features like case sensitivity options and the ability to view only the duplicates make this tool versatile for a wide range of text processing needs.

More Tools

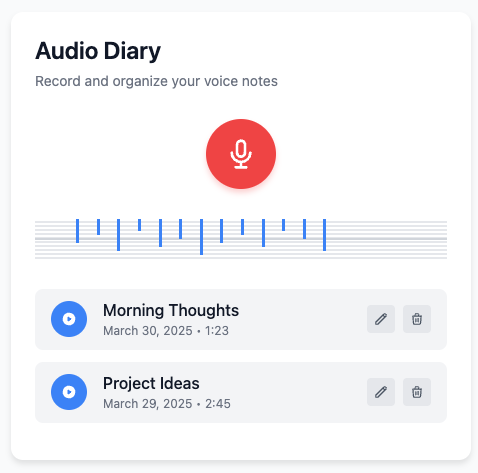

Audio Diary

Record and organize voice notes with this simple audio diary that stores everything locally on your device.

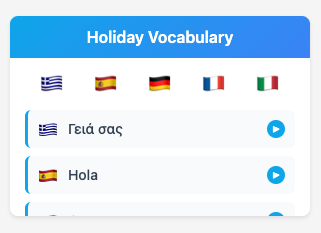

Holiday Vocabulary

Learn essential travel phrases in multiple languages with pronunciation guides for your vacation.

Math Solver

Solve basic math equations and expressions with detailed step-by-step explanations.

Todo List

Organize tasks with drag-and-drop reordering and track your progress with this simple todo list tool.

Shopping List

Keep track of items you need to buy with this simple shopping list tool that remembers what you've purchased.

Text Operations

A collection of 27 text manipulation tools for formatting, transforming, and analyzing text content.

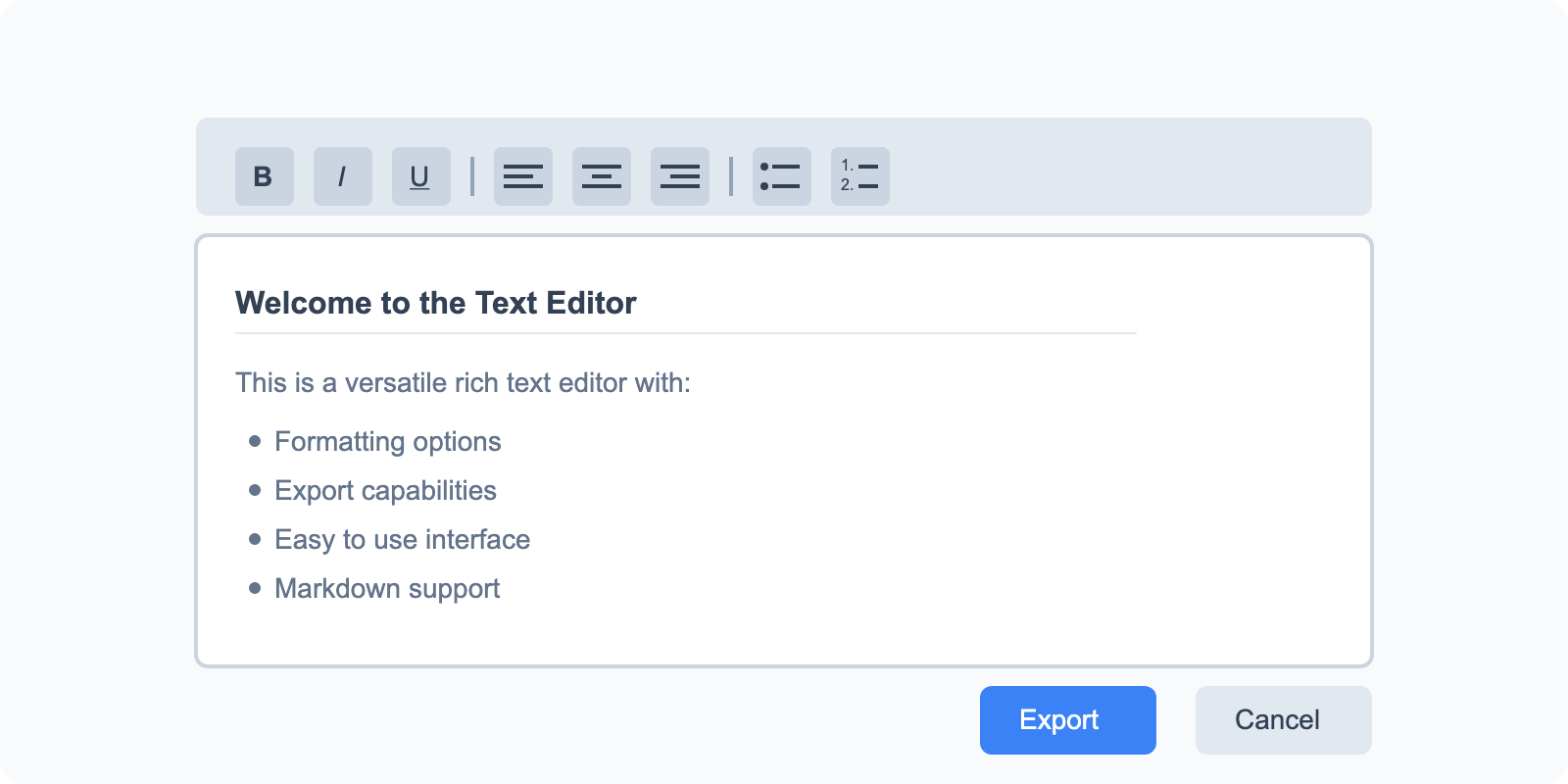

Text Editor

A versatile rich text editor with formatting options and export capabilities.

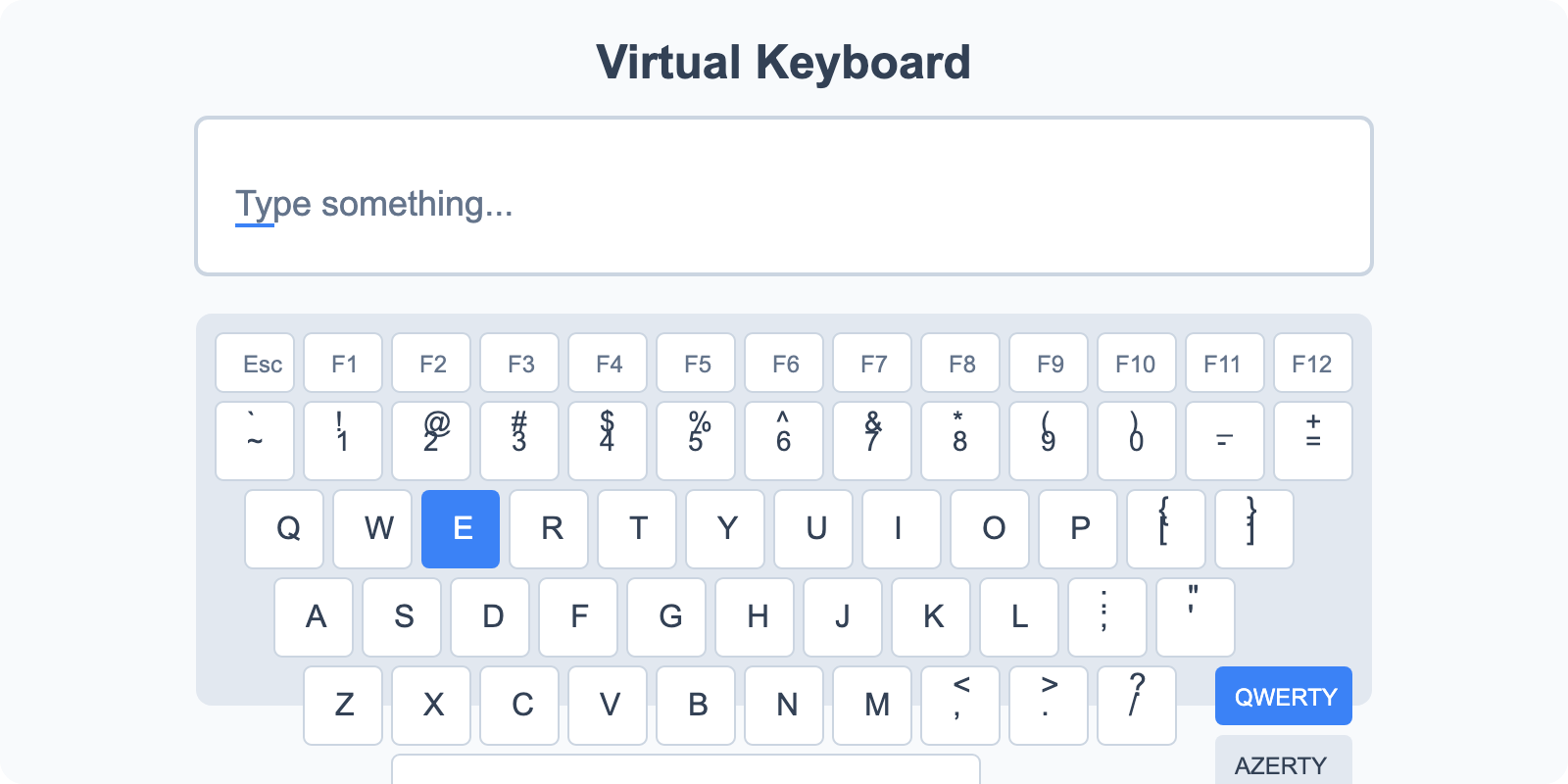

Virtual Keyboard

Type in different languages with multiple keyboard layouts.

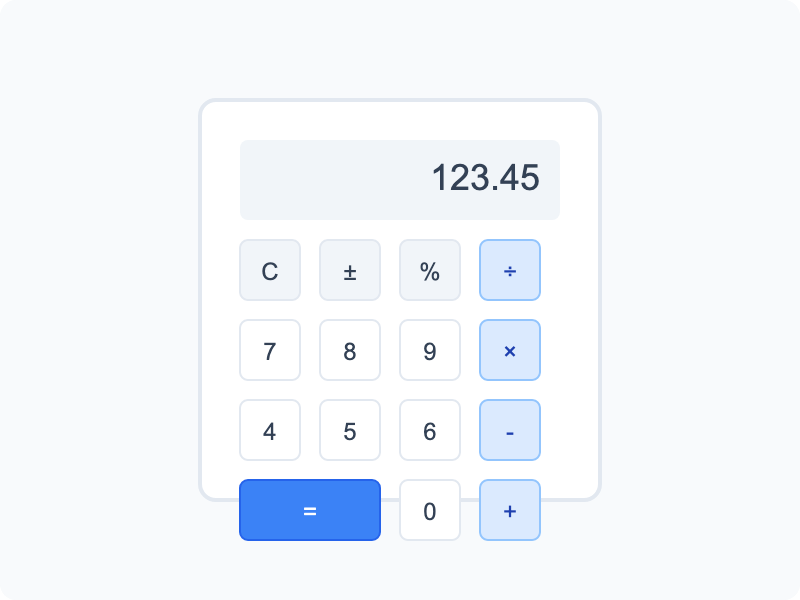

Calculator

Basic calculator and unit conversion tools for everyday calculations.

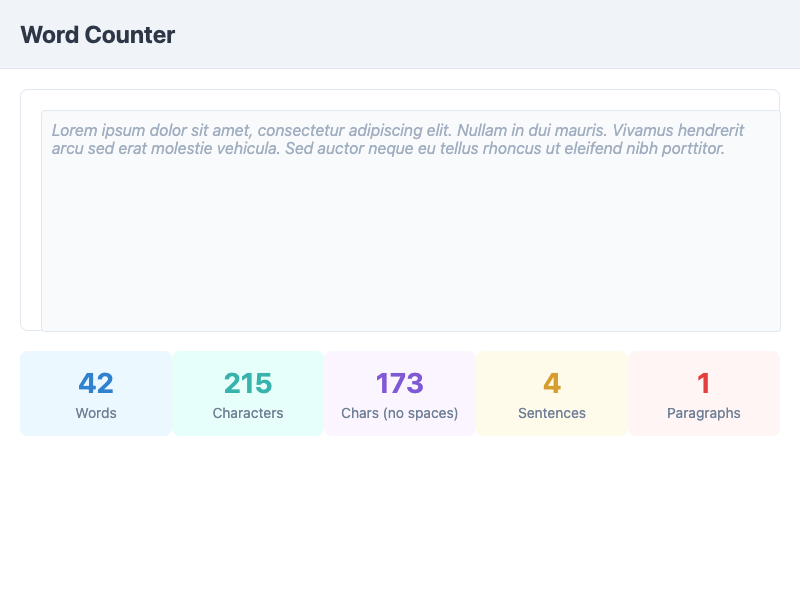

Word Counter

Count words, characters, sentences, and paragraphs in your text.

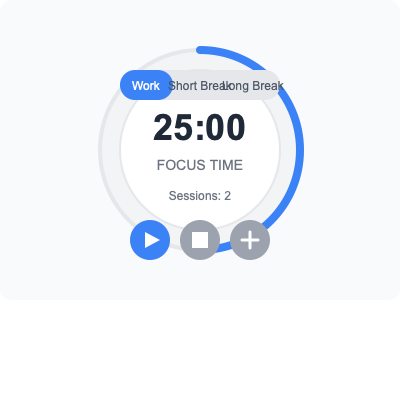

Pomodoro Timer

Boost productivity with timed work and break intervals using the Pomodoro Technique.

IP Address Lookup

Check your public IP address and view related location information.

Image Color Picker

Upload images and pick colors directly from them.

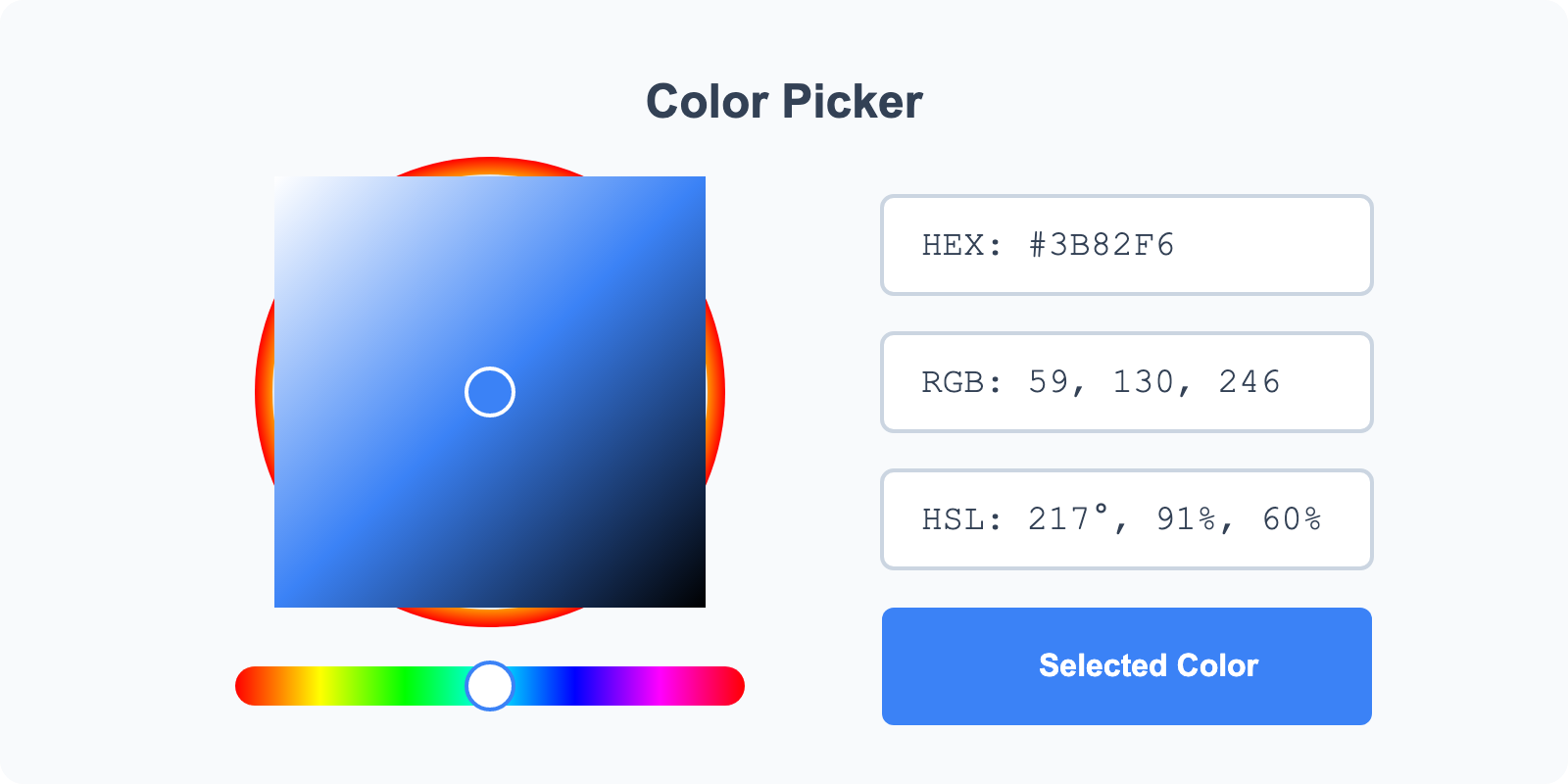

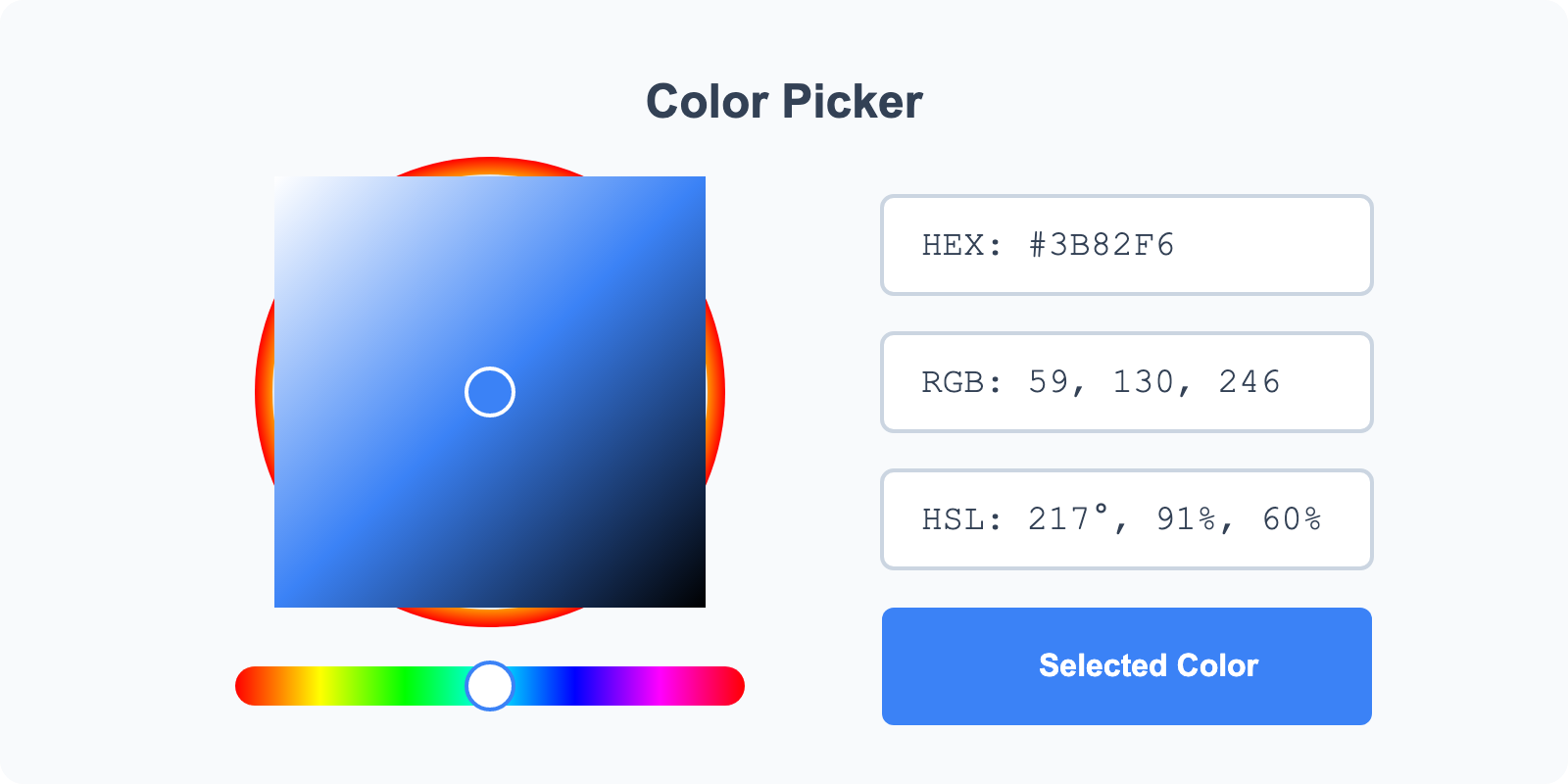

Color Selector

Select colors using RGB, HEX, or HSL pickers and create palettes.

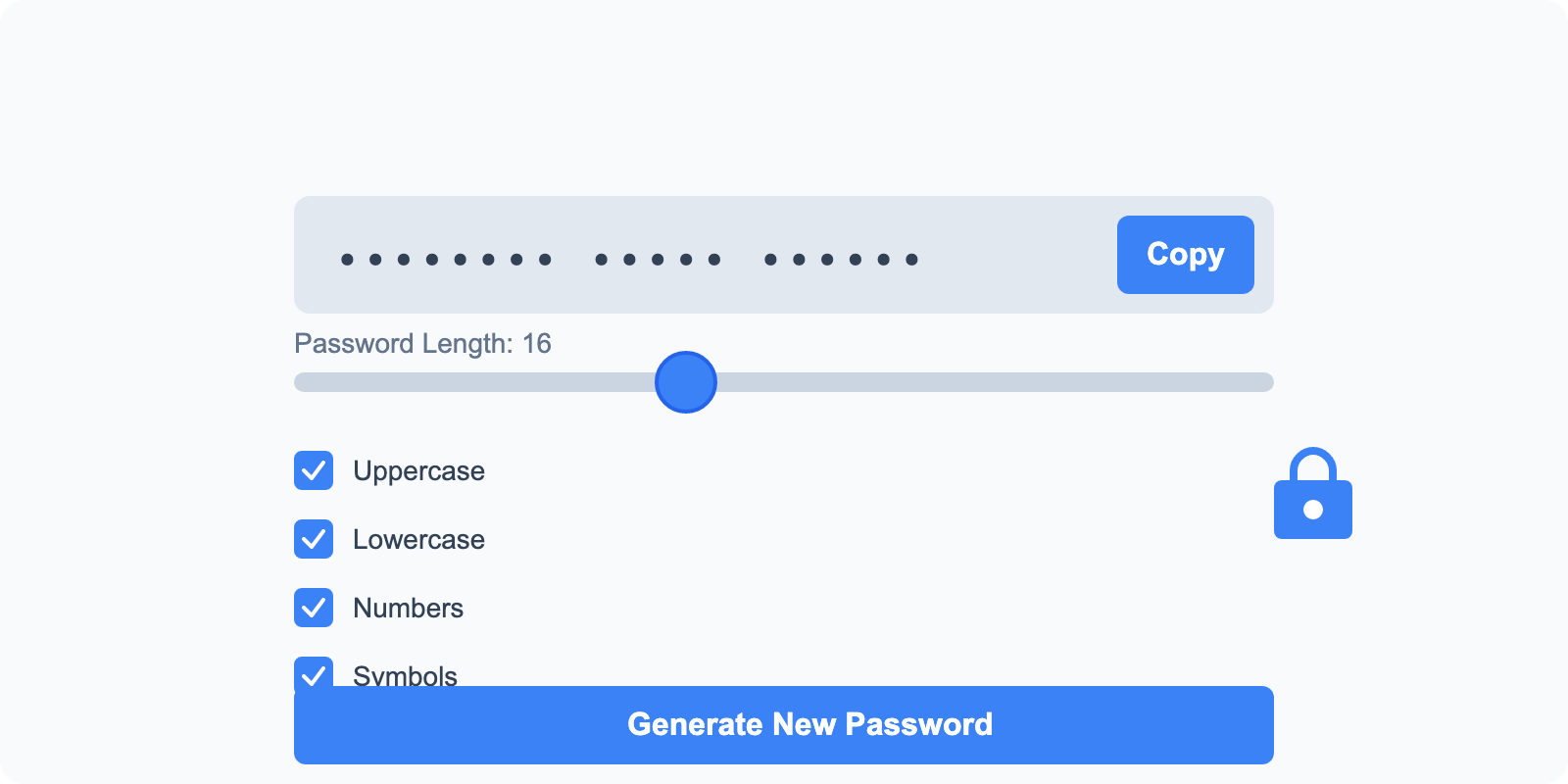

Password Generator

Generate secure passwords with custom requirements.